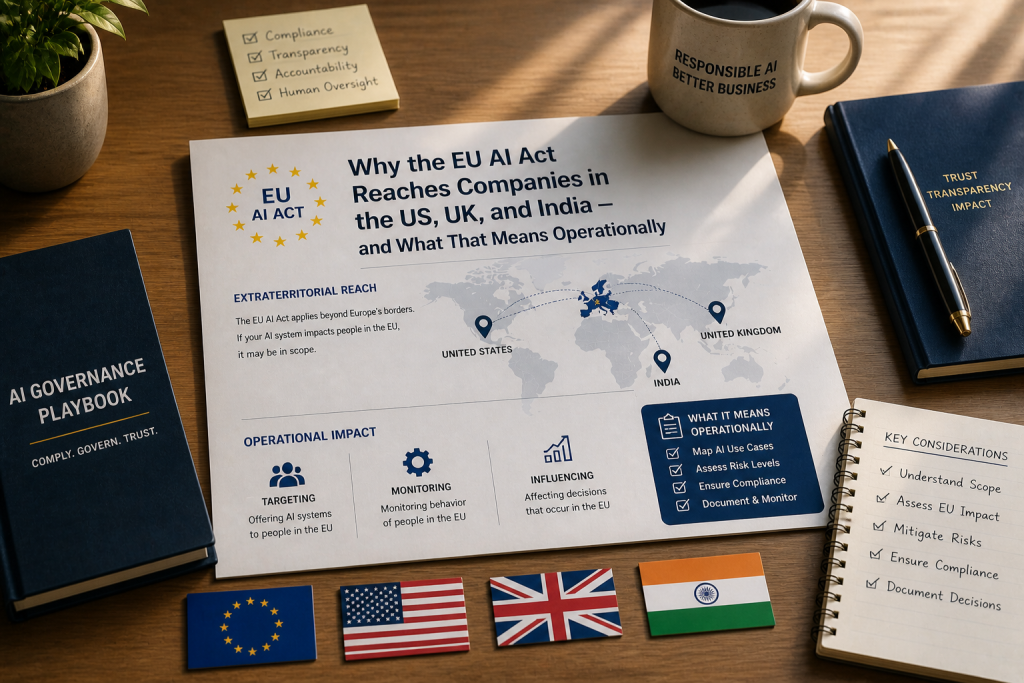

When the European Union’s AI Act entered into force on August 1, 2024, it was a headline regulation that most HR leaders read as someone else’s problem. It was framed as governance for AI labs, foundation model developers, and big tech platforms. Distant. Technical. Not really about hiring.

That framing was wrong from day one. It is becoming actively dangerous as the August 2, 2026 deadline approaches.

Annex III of the AI Act lists the use cases that qualify an AI system as “high-risk” and therefore subject to the most demanding tier of compliance obligations under the regulation. Alongside biometrics, critical infrastructure, education, access to essential private and public services, law enforcement, migration, and the administration of justice and democratic processes, the list includes, explicitly and unambiguously, the category most relevant to HR: employment, workers management and access to self-employment.

For organisations that have built modern recruitment infrastructure on top of AI-powered tools, the practical implication is straightforward and uncomfortable: most of your stack is high-risk under the Act, and if any of it touches workers in the EU, the compliance clock is running.

What Counts as High-Risk in HR

The AI Act’s framework around employment-related AI is broader than many HR leaders initially recognise. Under Annex III, AI systems intended for use in any of the following contexts are classified as high-risk:

Recruitment and selection. This includes AI used to place targeted job advertisements, screen or filter applications, evaluate or rank candidates, and assess responses in screening interviews or on application platforms. The classification covers tools at every stage of the funnel — from sponsored ad targeting to final-round assessment.

Decisions about employment relationships. AI systems used to make decisions about promotion, termination, work allocation, or contract terms based on individual behaviour, personal traits, or characteristics fall within scope. Performance evaluation tools that aggregate productivity data and surface flags for HR action are caught here. Workforce analytics tools that recommend reductions or restructuring are caught here.

Monitoring and evaluation. AI used to monitor and evaluate the performance and behaviour of workers — productivity tracking, communications analysis, output assessment, attention monitoring — falls within the high-risk category.

The breadth is intentional. The AI Act’s classification is risk-based, and EU legislators concluded that employment decisions affect fundamental rights significantly enough to warrant the highest tier of regulatory scrutiny short of outright prohibition. Two of the prohibited practices bear directly on HR: emotion recognition in the workplace and biometric categorisation based on sensitive attributes are banned outright, subject only to narrow exceptions.

The result is a regulatory framework where, for employment purposes, the question is rarely “is this AI tool subject to the Act?” The question is “what specifically does the Act require of us in deploying it?”

Mapping the Act to Real HR Technology Stacks

Take a typical modern HR technology stack and apply the Annex III classification. The picture clarifies quickly.

The applicant tracking system with AI-powered candidate matching: high-risk. The resume parser that scores candidate fit against role requirements: high-risk. The video interview platform that uses AI to analyse responses: high-risk and, depending on whether it includes emotion or sentiment analysis, potentially crossing into prohibited territory. The talent intelligence platform that scores candidates’ “likelihood of success”: high-risk.

Continuing into the employment relationship: the productivity analytics tool aggregating data across communications and document systems: high-risk. The 360-degree feedback platform with AI-powered theme extraction used in performance reviews: high-risk. The workforce planning tool that recommends restructuring based on predicted attrition: high-risk. The skills inference platform that automatically tags employees with capabilities based on activity data: high-risk.

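The tools that are typically not high-risk are the ones doing administrative work that doesn’t affect consequential decisions: payroll automation, benefits administration, basic chatbots answering FAQ-level questions about company policy. The line is whether the AI is contributing to a decision that materially affects the worker. Article 6(3) formalises this: an Annex III system that only performs a narrow procedural or preparatory task, and does not materially influence the outcome of decision-making, can fall outside the high-risk tier, but any Annex III system that profiles individuals is always high-risk.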

For most enterprise HR functions, that line is crossed multiple times per day across multiple tools. The compliance picture is not a question of swapping out one vendor. It is a question of building a governance framework that covers the entire ecosystem.
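To make the mapping concrete, here is a minimal triage sketch in Python. It assumes each tool in an inventory has already been tagged with what it does; the tag names (`recruitment`, `employment_decision`, `worker_monitoring`, `emotion_inference`, `sensitive_biometric_categorisation`) and the `triage` function are hypothetical, and the output is a first-pass label for legal review, not a legal determination. It deliberately ignores the Article 6(3) carve-outs for narrow procedural tasks, which need case-by-case assessment.

```python
from dataclasses import dataclass, field

# Hypothetical capability tags: illustrative labels, not terms defined by the AI Act.
HIGH_RISK_USES = {
    "recruitment",          # ad targeting, screening, ranking, interview assessment
    "employment_decision",  # promotion, termination, task allocation, contract terms
    "worker_monitoring",    # monitoring and evaluating performance or behaviour
}
PROHIBITED_FLAGS = {"emotion_inference", "sensitive_biometric_categorisation"}


@dataclass
class Tool:
    name: str
    uses: set[str] = field(default_factory=set)


def triage(tool: Tool) -> str:
    """First-pass label for legal review; not a legal determination."""
    if tool.uses & PROHIBITED_FLAGS:
        return "possible prohibited practice"  # checked first: outranks high-risk
    if tool.uses & HIGH_RISK_USES:
        return "likely high-risk"
    return "likely not high-risk"


stack = [
    Tool("ATS candidate matching", {"recruitment"}),
    Tool("Video interview analysis", {"recruitment", "emotion_inference"}),
    Tool("Productivity analytics", {"worker_monitoring"}),
    Tool("Payroll automation", {"payroll"}),
]
for tool in stack:
    print(f"{tool.name}: {triage(tool)}")
```

Checking prohibited flags before high-risk ones mirrors the structure of the Act: a tool that sits in Annex III and also performs a banned practice is a prohibition problem first.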

What the Act Actually Requires of Deployers

The AI Act distinguishes between providers — the organisations that develop or place AI systems on the market — and deployers, the organisations that use them. For most employers, the relevant role is deployer. The provider obligations fall on the vendor that built the tool. But deployer obligations are substantial in their own right and cannot be discharged by relying on vendor compliance.

The core deployer obligations for high-risk AI under the Act include:

Use the system in accordance with the provider’s instructions. This sounds procedural, but it has real substance. Deviating from documented use cases — for example, using a tool designed for screening to make final hiring decisions without human review — can shift the deployer into provider territory and substantially expand liability.

Ensure human oversight. High-risk AI systems must be designed and used in a way that allows for effective human oversight. This means designating individuals with appropriate competence, training, authority, and support to oversee the system’s outputs. The oversight must be meaningful — the designated person must have actual capacity to intervene, modify, and override the system’s decisions, not merely sign off on outputs they have no realistic ability to evaluate.
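One way to make oversight more than a sign-off is to have the workflow refuse to finalise an outcome without a designated reviewer and a recorded rationale, and to record whenever the human departs from the AI. The sketch below assumes a hypothetical roster (`AUTHORISED_REVIEWERS`) and hypothetical `Recommendation` and `Decision` records; the Act does not prescribe any particular mechanism.

```python
from dataclasses import dataclass

# Hypothetical roster of people designated, trained, and empowered to oversee the system.
AUTHORISED_REVIEWERS = {"j.smith", "a.kowalski"}


@dataclass(frozen=True)
class Recommendation:
    candidate_id: str
    ai_outcome: str    # e.g. "advance" or "reject"
    ai_rationale: str  # explanation surfaced to the reviewer


@dataclass(frozen=True)
class Decision:
    candidate_id: str
    final_outcome: str
    reviewer: str
    overridden: bool   # True when the human departed from the AI recommendation
    reviewer_note: str


def finalise(rec: Recommendation, reviewer: str, final_outcome: str, note: str) -> Decision:
    """Only a designated reviewer can finalise, and every decision needs a rationale."""
    if reviewer not in AUTHORISED_REVIEWERS:
        raise PermissionError(f"{reviewer!r} is not a designated overseer")
    if not note.strip():
        # Requiring a note for agreements too makes rubber-stamping visible in the audit trail.
        raise ValueError("every decision needs a reviewer note")
    return Decision(
        candidate_id=rec.candidate_id,
        final_outcome=final_outcome,
        reviewer=reviewer,
        overridden=final_outcome != rec.ai_outcome,
        reviewer_note=note,
    )


rec = Recommendation("cand-042", "reject", "low keyword match")
decision = finalise(rec, "j.smith", "advance", "Relevant experience listed under a different job title")
print(decision.overridden)  # True
```

Tracking the override rate over time is one rough signal of whether review is meaningful; a rate near zero may point to automation bias rather than a flawless model.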

Provide worker notification. Before putting a high-risk AI system into service or using it in the workplace, deployers that are employers must inform the affected workers and their representatives that they will be subject to it. This is the Article 26(7) obligation, and national labour laws in many member states go further, requiring consultation with employee representative bodies before new workplace technologies of this kind are introduced. In Belgium, for example, Collective Bargaining Agreement No. 39 of 1983 imposes a consultation obligation that is independent of, and pre-dates, the AI Act.

Maintain logs. Deployers must keep the logs automatically generated by a high-risk AI system, to the extent those logs are under their control, for a period appropriate to the system’s intended purpose and of at least six months, unless other Union or national law, in particular data protection law, provides otherwise. The logs must be sufficient to allow analysis of system functioning and traceability of outputs.
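Operationally, the six-month floor reduces to date arithmetic on each log entry. Here is a minimal sketch using only the Python standard library; `policy_months` stands in for a hypothetical internal retention policy, which may lengthen retention but should never drop below the floor. It does not model cases where other Union or national law (for example, GDPR-driven limits) sets a different period; those need legal input.

```python
import calendar
from datetime import date

MIN_RETENTION_MONTHS = 6  # the Article 26(6) floor; longer periods may be appropriate


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def retain_until(logged_on: date, policy_months: int = MIN_RETENTION_MONTHS) -> date:
    """Date through which a log entry should be kept under a hypothetical internal policy.

    The policy may lengthen retention but never shorten it below the six-month floor.
    """
    return add_months(logged_on, max(policy_months, MIN_RETENTION_MONTHS))


print(retain_until(date(2026, 8, 31)))      # 2027-02-28 (month-end clamping)
print(retain_until(date(2026, 8, 31), 12))  # 2027-08-31
print(retain_until(date(2026, 8, 31), 3))   # 2027-02-28 (the floor overrides 3 months)
```

Clamping a 31 August entry to 28 February follows one common convention for month-based periods; if your legal team prefers a more conservative reading, keep entries a few days longer.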

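Monitor for risks, including discrimination. Deployers must monitor the system’s operation on the basis of the provider’s instructions for use. Where they have reason to believe its use presents a risk to health, safety, or fundamental rights, such as discriminatory or other adverse impacts on protected groups, they must inform the provider or distributor and the relevant market surveillance authority without undue delay and suspend use.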
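The Act does not prescribe a metric for detecting discriminatory outcomes, so the choice falls to the deployer. One widely used heuristic compares each group’s selection rate with that of the most-selected group and flags ratios below 0.8 (the “four-fifths rule” from US employment practice). The sketch below assumes you can lawfully attribute outcomes to groups under GDPR; the group labels and counts are invented for illustration, and a flag is a prompt for investigation rather than proof of discrimination.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map group -> (selected, applicants) to group -> selection rate."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def impact_ratio_flags(
    outcomes: dict[str, tuple[int, int]], threshold: float = 0.8
) -> dict[str, float]:
    """Return groups whose selection rate is below `threshold` times the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}  # no one selected in any group: ratios are undefined
    return {g: rate / best for g, rate in rates.items() if rate / best < threshold}


# Invented counts from one screening stage: (advanced, screened)
stage = {"group_a": (50, 100), "group_b": (30, 100), "group_c": (45, 100)}
print(impact_ratio_flags(stage))  # {'group_b': 0.6}
```

Running a check like this per stage of the funnel, rather than only on final hires, makes it easier to see which tool introduced a disparity.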

Ensure data governance. The personal data processed by the AI system must be handled in accordance with applicable privacy law, including GDPR. The interaction between AI Act obligations and GDPR creates a layered compliance picture that requires HR, legal, and data protection functions to work together.

These obligations apply to the deployer regardless of vendor representations. A vendor’s claim that their tool is “AI Act compliant” addresses provider obligations; it does not transfer or eliminate deployer obligations. Treating vendor compliance assurances as a substitute for your own programme is a category of risk the Act is specifically structured to prevent.

Prohibited Practices Worth Naming Explicitly

Beyond high-risk classification, the Act prohibits certain AI practices outright. Two are directly relevant to HR.

Emotion recognition in the workplace. AI systems that infer the emotions of natural persons in the workplace are prohibited, with narrow exceptions for medical or safety reasons. This is significant because some workplace tools operate in this territory: productivity platforms with sentiment analysis, video interview systems claiming to detect candidate engagement or stress, and employee monitoring software that infers mood from typing patterns. If your organisation deploys any tool whose marketing language references “emotion detection,” “sentiment analysis of workers,” or “engagement inference,” that tool requires immediate scrutiny. Because the Act defines emotion recognition by reference to biometric data, such as facial images or voice, purely text-based sentiment analysis may fall outside the ban, but where that boundary lies for a given tool is a question for legal review.

Biometric categorisation based on sensitive attributes. AI systems that categorise individuals based on biometric data to deduce or infer race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation are prohibited. Some workforce analytics tools and identity verification systems have features that, intentionally or not, cross into this territory.
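A first pass over vendor marketing copy and product documentation can be automated with a simple term scan. This is a hypothetical triage aid, not a compliance test: the term list below is assembled from the warning signs named above plus a few obvious variants, it will miss tools that describe these features in other words, and a match only means a human needs to look.

```python
import re

# Illustrative red-flag phrases; extend to match your vendors' vocabulary.
RED_FLAG_TERMS = [
    "emotion detection",
    "emotion recognition",
    "sentiment analysis",
    "engagement inference",
    "stress detection",
    "mood",
    "biometric categorisation",
    "biometric categorization",
]


def red_flags(text: str) -> list[str]:
    """Return the red-flag terms that appear as whole words or phrases in `text`."""
    lowered = text.lower()
    return [
        term
        for term in RED_FLAG_TERMS
        if re.search(r"\b" + re.escape(term) + r"\b", lowered)
    ]


brochure = "Real-time Sentiment Analysis reveals how engaged your workforce really is."
print(red_flags(brochure))  # ['sentiment analysis']
```

Whole-word matching keeps obvious false positives down, but the list still needs tuning against real vendor material before it is useful.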

The penalty ceiling for prohibited practices is the highest in the Act: up to €35 million or 7% of global annual turnover, whichever is higher. The prohibitions have applied since February 2, 2025; they are not pending. Any HR tool in use today that performs a prohibited practice is already non-compliant.
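The “whichever is higher” rule is simple arithmetic, but it means the effective ceiling scales with company size. A small sketch, working in whole euros to avoid floating-point rounding; the `is_sme` parameter reflects Article 99(6), under which SMEs and start-ups face whichever of the two amounts is lower. These are statutory ceilings, not predicted fines.

```python
FIXED_CAP_EUR = 35_000_000
TURNOVER_PCT = 7  # percent of total worldwide annual turnover


def prohibited_practice_cap(turnover_eur: int, is_sme: bool = False) -> int:
    """Maximum fine for a prohibited practice, in whole euros (rounded down)."""
    pct_cap = turnover_eur * TURNOVER_PCT // 100
    # Larger undertakings: whichever is higher. SMEs and start-ups: whichever is lower.
    return min(FIXED_CAP_EUR, pct_cap) if is_sme else max(FIXED_CAP_EUR, pct_cap)


print(prohibited_practice_cap(2_000_000_000))            # 140000000
print(prohibited_practice_cap(200_000_000))              # 35000000
print(prohibited_practice_cap(20_000_000, is_sme=True))  # 1400000
```

For a group with €2 billion in turnover, the 7% limb dominates, so the ceiling is €140 million, four times the fixed amount.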

The Article 26(7) Obligation and the Consultation Duties That Already Apply

One specific aspect of the AI Act deserves emphasis because the duties surrounding it already bite: Article 26(7) requires employers to inform workers and their representatives in a clear and comprehensible manner before deploying high-risk AI systems in the workplace.

Article 26(7) itself applies together with the rest of the high-risk deployer obligations, from August 2, 2026 under the current timetable. But national labour laws in many EU member states already require consultation with works councils and employee representative bodies before qualifying systems are deployed. In Belgium, France, Germany, the Netherlands, and other jurisdictions with strong worker representation frameworks, that consultation requirement is operative now; it is not waiting for August 2026 or December 2027 to begin.

For HR teams that have already deployed AI tools that fall within the high-risk category in EU-based operations, the question is whether the consultation obligation was met at the point of deployment. If it wasn’t, retrospective remediation may be required. For tools currently in procurement or planning, the consultation obligation should be built into the project plan from the start — not added as an afterthought after technical implementation is complete.

What Organisations Should Be Doing Now

Given the regulatory uncertainty around the August 2026 deadline, which the proposed Digital Omnibus package would defer to as late as December 2, 2027, and the live obligations that already apply, the practical posture for HR and compliance leaders is straightforward.

Inventory. Build a complete inventory of AI systems currently in use across your HR stack, identifying which fall within high-risk categories under Annex III. Most organisations significantly underestimate the breadth of AI in their stack until they conduct this exercise.

Review provider documentation. For each high-risk system, request and review the instructions for use, the EU declaration of conformity, confirmation of CE marking, and any technical documentation the provider will share. Verify that the documented intended purpose matches how your organisation is actually using the tool.

Establish deployer governance. For each high-risk system, document who is responsible for human oversight, what specific monitoring will be conducted, how worker notification will be handled, and how the six-month minimum log retention requirement will be operationalised.

Address the EU-specific obligations. For any high-risk AI used to evaluate workers or candidates in EU member states, ensure consultation with employee representative bodies has been completed or is scheduled. This obligation does not wait for the high-risk system enforcement deadline.
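The governance and consultation items above lend themselves to a per-system record that can be checked for gaps. The field names below are hypothetical, chosen to mirror this checklist; `None` means the item has not been documented yet, and the consultation check only fires for systems used on workers or candidates in EU member states.

```python
from dataclasses import dataclass, fields
from typing import Optional

MIN_LOG_RETENTION_MONTHS = 6


@dataclass
class DeployerRecord:
    system: str
    oversight_owner: Optional[str] = None       # named person with authority to override
    monitoring_plan: Optional[str] = None       # what is monitored, how often, by whom
    worker_notification: Optional[str] = None   # how and when workers are informed
    log_retention_months: Optional[int] = None
    used_in_eu: bool = False
    eu_consultation_done: Optional[bool] = None  # works council / representative consultation


def gaps(record: DeployerRecord) -> list[str]:
    """List undocumented or non-compliant items for one high-risk system."""
    problems = [
        f.name
        for f in fields(record)
        if f.name not in ("system", "used_in_eu", "eu_consultation_done")
        and getattr(record, f.name) is None
    ]
    retention = record.log_retention_months
    if retention is not None and retention < MIN_LOG_RETENTION_MONTHS:
        problems.append("log_retention_months below six-month minimum")
    if record.used_in_eu and not record.eu_consultation_done:
        problems.append("EU consultation not completed")
    return problems


ats = DeployerRecord(
    system="ATS candidate matching",
    oversight_owner="Head of Talent Acquisition",
    log_retention_months=3,
    used_in_eu=True,
)
print(gaps(ats))
```

For the example record this reports the missing monitoring plan and worker notification, the three-month retention setting, and the outstanding consultation.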

Plan for both timelines. Build the compliance programme to be operational by August 2, 2026. If the Digital Omnibus is adopted and the deadline shifts to December 2, 2027, the work is not wasted — it positions the organisation well ahead of the revised deadline. If the Omnibus is not adopted in time, the original date applies and organisations relying on the deferral face genuine exposure.
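The “plan for both timelines” logic can be expressed as a check of a planned readiness date against each scenario. A minimal sketch; the December 2, 2027 date applies only if the proposed Digital Omnibus is adopted in time, and the function names are illustrative.

```python
from datetime import date

ORIGINAL_DEADLINE = date(2026, 8, 2)
OMNIBUS_DEADLINE = date(2027, 12, 2)  # only if the proposed Digital Omnibus is adopted in time


def applicable_deadline(omnibus_adopted: bool) -> date:
    return OMNIBUS_DEADLINE if omnibus_adopted else ORIGINAL_DEADLINE


def readiness(ready_by: date, omnibus_adopted: bool) -> str:
    """Compare a planned readiness date with the deadline in one scenario."""
    deadline = applicable_deadline(omnibus_adopted)
    if ready_by <= deadline:
        return f"on time, {(deadline - ready_by).days} days of margin"
    return f"late by {(ready_by - deadline).days} days"


plan = date(2027, 3, 1)  # a programme scheduled on the assumption of deferral
for adopted in (True, False):
    print(f"Omnibus adopted={adopted}: {readiness(plan, adopted)}")
```

A plan dated against the original deadline passes both scenarios; a plan dated against the deferral passes only one, which is exactly the exposure the paragraph above describes.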

AMS Inform provides background verification and workforce screening services across 160+ countries. For organisations reviewing their AI compliance frameworks in HR contexts, speak to our team at AMSinform.com.