There is a question that comes up constantly when the EU AI Act is discussed with HR and compliance leaders outside of Europe: does this actually apply to us?

The short answer is yes, more often than you think. The longer answer requires understanding how the Act’s territorial scope is constructed, why it reaches companies that have no EU establishment, and what specifically triggers obligations for non-European employers.

For US, UK, Indian, Middle Eastern, and other non-EU organisations whose hiring or workforce activities touch Europe in any way — and that is a much larger group than most realise — the AI Act creates compliance obligations that are now squarely on the operational agenda for 2026.

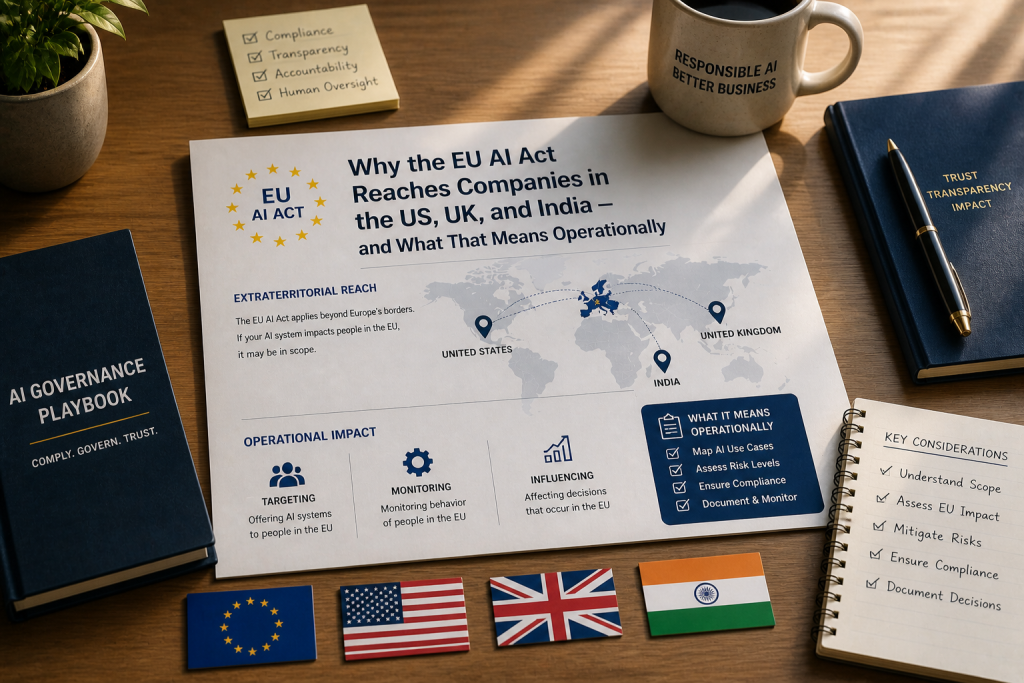

The Extraterritorial Architecture

The AI Act applies to three categories of actors: providers placing AI systems on the EU market or putting them into service in the EU; deployers (the Act’s term for organisations using AI systems) located within the EU; and providers and deployers located outside the EU where the output of the AI system is used in the EU.

That third category is the one that catches non-European employers. The Act does not require a company to be headquartered in the EU, registered in the EU, or have any physical presence in the EU. It requires only that the output of the AI system is used in the Union.

For employment AI, “output used in the EU” has a relatively broad meaning. If an AI tool is used to screen candidates physically located in EU member states, or to evaluate workers performing work in the EU, or to generate decisions that affect individuals in the EU, the Act applies. The location of the company’s headquarters is irrelevant. The location of the AI system’s servers is irrelevant. What matters is where the output lands and whose rights it affects.
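The test described above can be sketched in a few lines of Python. This is an illustration of the reasoning, not a legal determination: the `AiUse` record and its field names are hypothetical, and the three boolean triggers simply mirror the three situations named in the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class AiUse:
    """One use of an AI tool in hiring or workforce management (hypothetical record)."""
    hq_country: str                      # recorded, but deliberately not consulted
    server_country: str                  # recorded, but deliberately not consulted
    screens_candidates_in_eu: bool       # candidates physically located in a member state
    evaluates_workers_in_eu: bool        # workers performing work in the EU
    decisions_affect_people_in_eu: bool  # decisions affecting individuals in the EU


def output_used_in_eu(use: AiUse) -> bool:
    """Return True if any trigger is met; headquarters and server location play no role."""
    return (
        use.screens_candidates_in_eu
        or use.evaluates_workers_in_eu
        or use.decisions_affect_people_in_eu
    )


# Hypothetical example: a US-headquartered employer with US-hosted tooling
# that accepts applications from candidates located in the EU.
remote_hiring = AiUse(
    hq_country="US",
    server_country="US",
    screens_candidates_in_eu=True,
    evaluates_workers_in_eu=False,
    decisions_affect_people_in_eu=False,
)
print(output_used_in_eu(remote_hiring))
```

The design point is that `hq_country` and `server_country` are stored but never read by the check, which is exactly the position the paragraph above describes.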

This is parallel to the GDPR’s territorial scope, and intentionally so. The EU has built a consistent regulatory architecture across data protection and AI governance: if your activities affect people in the Union, the Union’s rules apply.

The Practical Triggers for Non-EU Employers

For non-European employers, several common scenarios trigger AI Act obligations.

Hiring remote workers based in EU member states. If your organisation hires for fully remote roles and accepts applications from candidates physically located in Germany, France, the Netherlands, Spain, Italy, or any other EU member state, the AI tools used to screen, evaluate, or score those candidates fall within scope. This is the most common trigger for non-European employers and the one most often missed.

Operating EU subsidiaries or branches that share HR technology. Multinational employers running shared HR technology infrastructure across global operations frequently use the same AI-powered ATS, screening tools, and performance management systems for European entities as for the rest of the business. Those tools, when applied to European workers and candidates, are within scope. The fact that the same tool is “compliant” or “exempt” in another jurisdiction is irrelevant to the EU analysis.

Posting jobs into European markets. Targeted job advertising using AI-powered audience selection or programmatic placement, where the audience includes individuals in the EU, is covered. The Act explicitly includes targeted job advertising as a high-risk use case under Annex III.

Using global staffing agencies that hire into the EU. Companies using staffing agencies, employer-of-record services, or workforce platforms to access talent in the EU may find themselves with obligations even though they don’t directly manage the hiring infrastructure. The AI Act puts responsibility on whoever is deploying the system in the relevant context — and contractual allocation of responsibility between the agency and the client doesn’t override the regulatory framework.

Cross-border services involving European workers. Indian IT services firms, UK consultancies, US tech companies running global delivery models, Gulf-based contractors with European operations — all of these models commonly involve AI-powered workforce decisions affecting European workers. The Act reaches all of them.

What “High-Risk” Actually Means for the Compliance Workload

For non-European employers caught by extraterritorial application, the deployer obligations under the AI Act are substantively the same as they are for EU-based employers. The key obligations were covered in this week’s first blog: human oversight, worker notification, log retention, monitoring for discrimination, data governance.

But there are a few wrinkles specific to the non-European deployer position worth highlighting.

The provider relationship complication. If your AI tool was built by a US, Indian, or other non-European vendor, the vendor’s own AI Act compliance status may not be straightforward. Some non-European AI providers have not yet completed the conformity assessments and CE marking processes required to legally place high-risk AI systems on the EU market. Deployers using non-compliant high-risk systems on EU outputs may themselves be in violation, even if the technical responsibility for the conformity assessment lies with the provider.

The practical implication: deployers should verify provider compliance documentation, not assume it. Contractual representations are useful but not dispositive — your obligations as deployer are direct, not indirect.

The fundamental rights impact assessment. Certain deployers are required to conduct a fundamental rights impact assessment before deployment, chiefly public-sector bodies, private entities providing public services, and deployers covered by other specific provisions. The scope of this requirement is currently being clarified through Commission guidance, but for HR contexts, the safer assumption is to conduct a documented assessment of how the AI system may affect the fundamental rights of workers and candidates.

The interaction with GDPR. Most non-European employers using AI for workforce decisions about EU individuals are already subject to GDPR. The AI Act adds to, rather than replaces, GDPR obligations. Article 22 of the GDPR — which restricts solely automated decisions producing legal effects or similarly significant effects — already creates obligations around AI-driven hiring decisions. The AI Act adds the technical, governance, and oversight requirements on top of GDPR’s existing constraints.

For HR and legal teams managing EU-affecting AI activities, this means the compliance work isn’t a single project. It is a coordinated programme covering data protection, AI governance, and workforce regulation.

The Enforcement Picture

The AI Act has moved from theoretical to operationally enforceable over the past year, and continues to do so.

In January 2026, Finland became the first member state to formally confer enforcement powers on its market surveillance authority under Article 99 of the AI Act, rendering those powers fully operational. Other member states are following — each EU country must designate a competent authority to enforce the Act, and the architecture is being built out across 2026.

The penalty ceilings are deliberately calibrated to be material. For violations of high-risk system obligations, fines can reach €15 million or 3% of global annual turnover, whichever is higher. For prohibited AI practices — emotion recognition in the workplace, biometric categorisation based on sensitive traits, and others — the ceiling rises to €35 million or 7%. Providing incorrect or misleading information to regulators carries a separate penalty of up to €7.5 million or 1%.

The “global annual turnover” element is significant. These are not penalties tied to European revenue — they are calculated against worldwide revenue. For a US technology company, a UK consultancy, or an Indian IT services firm with significant global revenue, 3% to 7% is a number that gets attention at board level.
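To make the arithmetic concrete, here is a minimal sketch of the "whichever is higher" calculation, using only the three ceilings quoted above. The tier names, the `penalty_ceiling` function, and the EUR 2 billion turnover figure are illustrative assumptions, and the sketch does not model any different treatment that may apply to smaller companies.

```python
from decimal import Decimal

# Ceilings as quoted in this article:
# (fixed amount in EUR, share of global annual turnover). Tier names are hypothetical.
PENALTY_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_obligation": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),
}


def penalty_ceiling(tier: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the maximum fine for a tier: the higher of the fixed amount
    and the stated share of worldwide annual turnover."""
    fixed_amount, turnover_share = PENALTY_TIERS[tier]
    return max(fixed_amount, turnover_share * global_turnover_eur)


# Hypothetical company with EUR 2 billion in worldwide revenue.
turnover = Decimal("2000000000")
for tier in PENALTY_TIERS:
    print(f"{tier}: EUR {penalty_ceiling(tier, turnover):,.0f}")
```

For a company of that size the percentage, not the fixed amount, sets the ceiling in every tier; the fixed amounts only bind for organisations with much smaller worldwide revenue.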

Enforcement will not be uniform across the EU. The decentralised model means national authorities will set their own priorities, develop their own interpretive approaches, and pursue cases that fit their strategic agenda. Some member states will likely be more aggressive than others on employment AI specifically. Organisations operating across multiple member states should expect different enforcement postures and plan accordingly.

The Digital Omnibus Uncertainty

Layered on top of the substantive picture is the procedural uncertainty around the Digital Omnibus package, proposed by the European Commission on November 19, 2025.

The Omnibus package, if adopted in its proposed form, would defer the high-risk system compliance deadline from August 2, 2026 to December 2, 2027. It would also tie the date on which the high-risk obligations start to apply to the availability of harmonised technical standards and compliance tools developed by European standardisation bodies, meaning the deadline could shift further if standards are not finalised in time.

The Omnibus is making its way through the EU’s legislative process. At the time of writing, the second political trilogue between the European Parliament, the Council of the EU, and the European Commission had been held on April 28, 2026 and ended without agreement, and a further trilogue was scheduled for May 13, 2026. If the Omnibus is not formally adopted before August 2, 2026, the original AI Act provisions, including the high-risk obligations and their current timeline, apply from that date as written.

For HR and compliance leaders, this creates a genuine planning challenge. The conservative posture is to plan for the original August 2026 deadline. The risk-based posture is to plan for it as well — because if the Omnibus is not adopted in time, organisations relying on the deferral will be in active violation when the original deadline passes, with no opportunity to remediate retrospectively.

The recommendation from major law firms tracking this — DLA Piper, Crowell, Ogletree, and others — is consistent: continue compliance preparations against the original 2026 deadline. If the Omnibus passes, the work is not wasted; it positions the organisation comfortably ahead. If it doesn’t, the organisation is protected.

Building the Compliance Programme: A Pragmatic Framework

For non-European employers facing this complexity, the pragmatic compliance framework looks like this.

Scope mapping. Identify all AI tools currently in use across your HR and workforce stack. For each, determine whether outputs of the tool affect workers or candidates in the EU. The scope is broader than most organisations expect — applicants from the EU applying to remote roles count, even if the role is not described as European.

Classification. For each in-scope tool, determine whether it falls within an Annex III high-risk category. Most enterprise HR AI does. Some narrow categories of HR automation may be exempt, but the default assumption should be that employment AI is high-risk until specifically determined otherwise.

Provider verification. For each high-risk tool, request and document the provider’s compliance status — conformity assessment, CE marking, technical documentation. Some non-European providers will not have completed this process. Tools without provider compliance should be flagged for replacement, restriction, or escalation.

Deployer programme. Build the deployer compliance programme: human oversight roles, monitoring procedures, worker notification protocols, log retention infrastructure. Document everything.

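Worker representative consultation. For tools deployed in EU contexts, ensure that workers’ representatives and affected workers are informed before a high-risk system is put into use at the workplace, as Article 26(7) requires, and that any consultation obligations under applicable national law are met. Treat this as a current obligation, not a future one.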

Vendor contract review. Review and where necessary update vendor contracts to address AI Act compliance, indemnification for vendor failures, audit rights, and ongoing compliance maintenance. Most existing HR technology contracts are inadequate on these points.

Documentation. Document the entire compliance programme. The AI Act’s enforcement model relies heavily on documented evidence of compliance activities. Organisations that can produce contemporaneous, organised compliance documentation will be in a fundamentally stronger position than those who can’t, regardless of any individual technical question.
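The first three steps of the framework (scope mapping, classification, provider verification) lend themselves to a simple tool inventory. The sketch below is one possible shape for it, assuming a hypothetical `HrAiTool` record and hypothetical status labels; it encodes the default stated above that in-scope employment AI is treated as high-risk until specifically determined otherwise.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HrAiTool:
    """One entry in the HR AI inventory (hypothetical structure)."""
    name: str
    affects_eu_individuals: bool         # scope mapping
    annex_iii_high_risk: Optional[bool]  # classification; None means not yet determined
    provider_docs_verified: bool         # provider verification


def triage(tool: HrAiTool) -> str:
    """Return the next action for a tool, following the framework's order of steps."""
    if not tool.affects_eu_individuals:
        return "out_of_scope"
    # Default assumption: high-risk unless specifically determined otherwise.
    if tool.annex_iii_high_risk is False:
        return "in_scope_not_high_risk"
    if not tool.provider_docs_verified:
        return "flag_for_replacement_restriction_or_escalation"
    return "build_deployer_programme"


# Hypothetical inventory entries.
inventory = [
    HrAiTool("resume-screener", True, None, False),
    HrAiTool("interview-scheduler", True, False, True),
    HrAiTool("us-only-payroll-bot", False, None, False),
    HrAiTool("performance-scoring", True, True, True),
]
for tool in inventory:
    print(tool.name, "->", triage(tool))
```

Keeping the inventory in a structured form like this also serves the documentation step: each record is a dated, reviewable statement of what was assessed and what was decided.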

The companies that navigate this period well will not be the ones who guessed correctly about the deadline. They will be the ones who built genuine compliance programmes that work regardless of the timeline. Given the breadth of obligations, the breadth of in-scope tools, and the size of the potential penalties, that is the only defensible operational posture for 2026.

AMS Inform provides background verification and workforce screening services across 160+ countries. For organisations operating across borders and managing AI compliance in HR contexts, speak to our team at AMSinform.com.